Md. Badsha Biswas

Skills & Expertise

Research Interests

Programming

Agentic AI & Orchestration

Libraries & Frameworks

Data & Pipelines

MLOps/DevOps

About Me

PhD Student & Researcher

Actively Seeking Summer Internship 2026

Open to exciting opportunities in Machine Learning, NLP, and AI research

I am a PhD student in Computer Science at George Mason University, specializing in Machine Learning, Deep Learning, Natural Language Processing, LLMs, Reinforcement Learning, Multimodal Reasoning, and Generative AI.

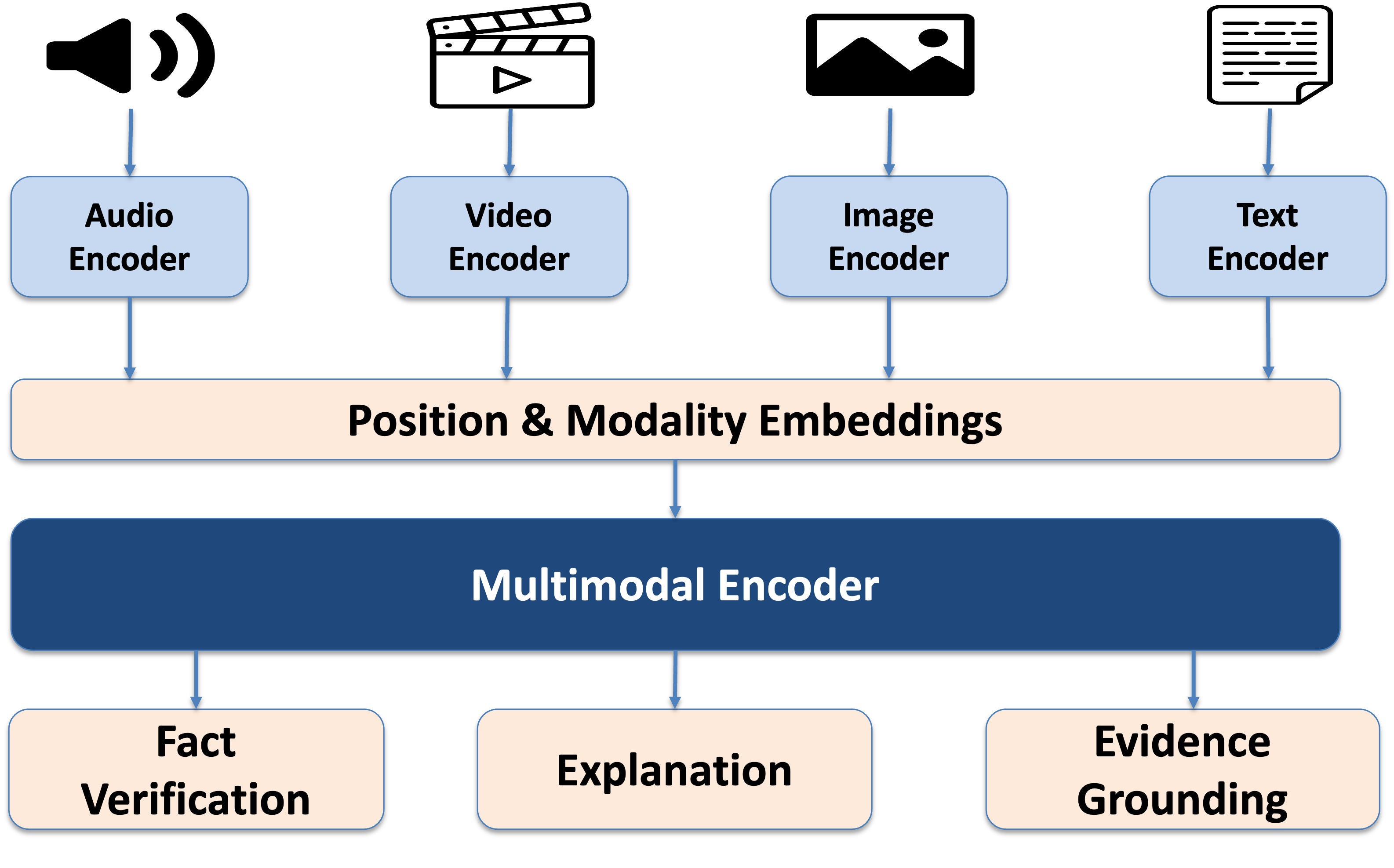

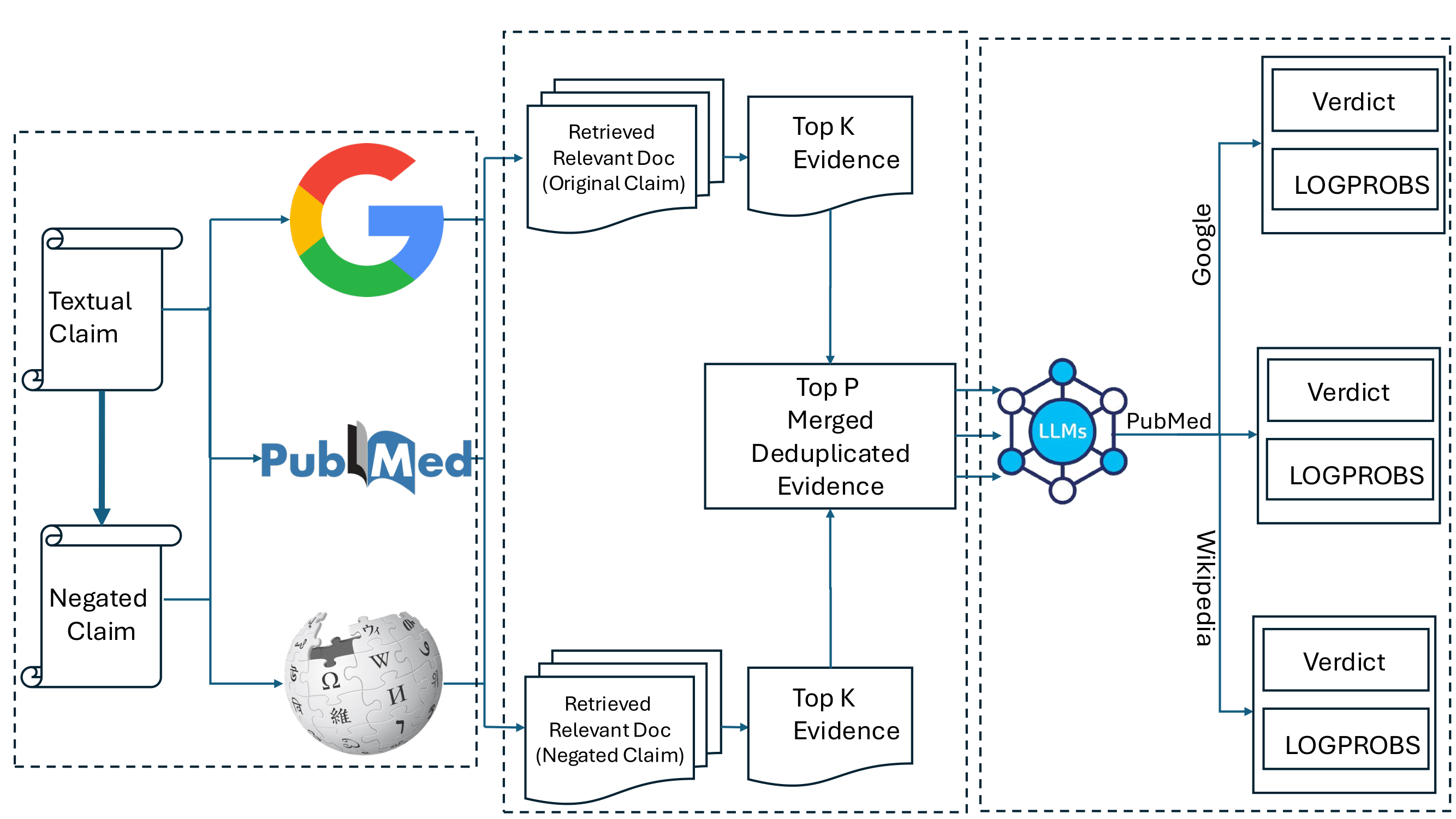

My research focuses on building reliable, evidence-grounded LLM systems, especially Retrieval-Augmented Generation (RAG) and agentic AI pipelines that can plan, retrieve, verify, and write with transparency. I specialize in LLMs (RAG, Agentic AI), reinforcement learning, multimodal reasoning, and generative AI, and I work with Dr. Özlem Uzuner on methods that reduce hallucinations by improving retrieval quality and handling agreement vs. disagreement across evidence sources. My recent work includes a multi-source, retrieval-based claim verification direction that explicitly models source-level disagreement using LLMs, along with applied research on LLM prompting for health-related social media understanding, with the aim of making LLM outputs not only fluent but also auditable and trustworthy.

Recently, I built a multimodal RAG + Deep Research system that achieved 1.5×–1.7× faster responses, 35–40% efficiency gains, and a 98% reduction in reasoning time, and I developed a multi-agent writing workflow that reduced first-draft time by 95%. Earlier, I worked as a Software Engineer at BJIT (Tokyo), where I built a personalized recommender/advertising system that reduced manual campaign tuning by 70%.

I am optimistic about making a beautiful world with Science and Technology. I am actively looking for research opportunities and collaborations in my areas of interest and would be delighted to connect with fellow researchers and potential collaborators.

"If an elderly but distinguished scientist says that something is possible, he is almost certainly right; but if he says that it is impossible, he is very probably wrong."

Publications

Data Augmentation for Classification of Negative Pregnancy Outcomes in Imbalanced Data

arXiv

Md. Badsha Biswas

arXiv preprint arXiv:2512.22732, 2025

Quantitative Currency Evaluation in Low-Resource Settings through Pattern Analysis to Assist Visually Impaired Users

ICDM

MSI Ovi, M Hossain, Md. Badsha Biswas

arXiv preprint arXiv:2509.06331, 2025

Mason NLP-GRP at #SMM4H-HeaRD 2025: Prompting Large Language Models to Detect Dementia Family Caregivers

AAAI

Md. Badsha Biswas, Ozlem Uzuner

2025

Research Projects

Awards & Honors

Summer Research Award — George Mason University

Recognition for summer research contribution

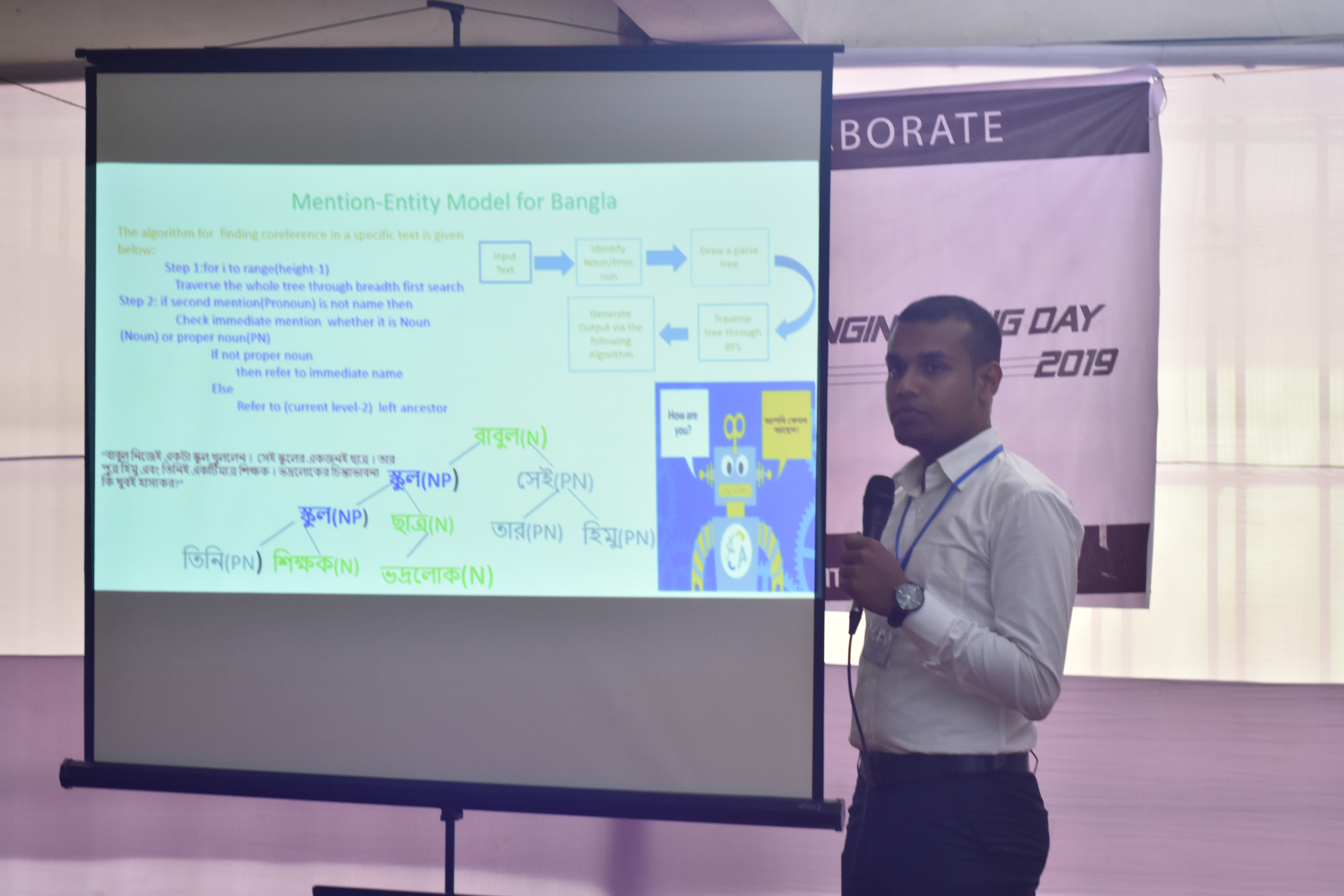

3rd Position — 3MT Thesis, Engineering Day

University of Chittagong

Honorary Award — Prothom Alo & Teletalk

Awarded for SSC examination performance

Scholarship — Jessore Education Board

Merit scholarship awarded by the board

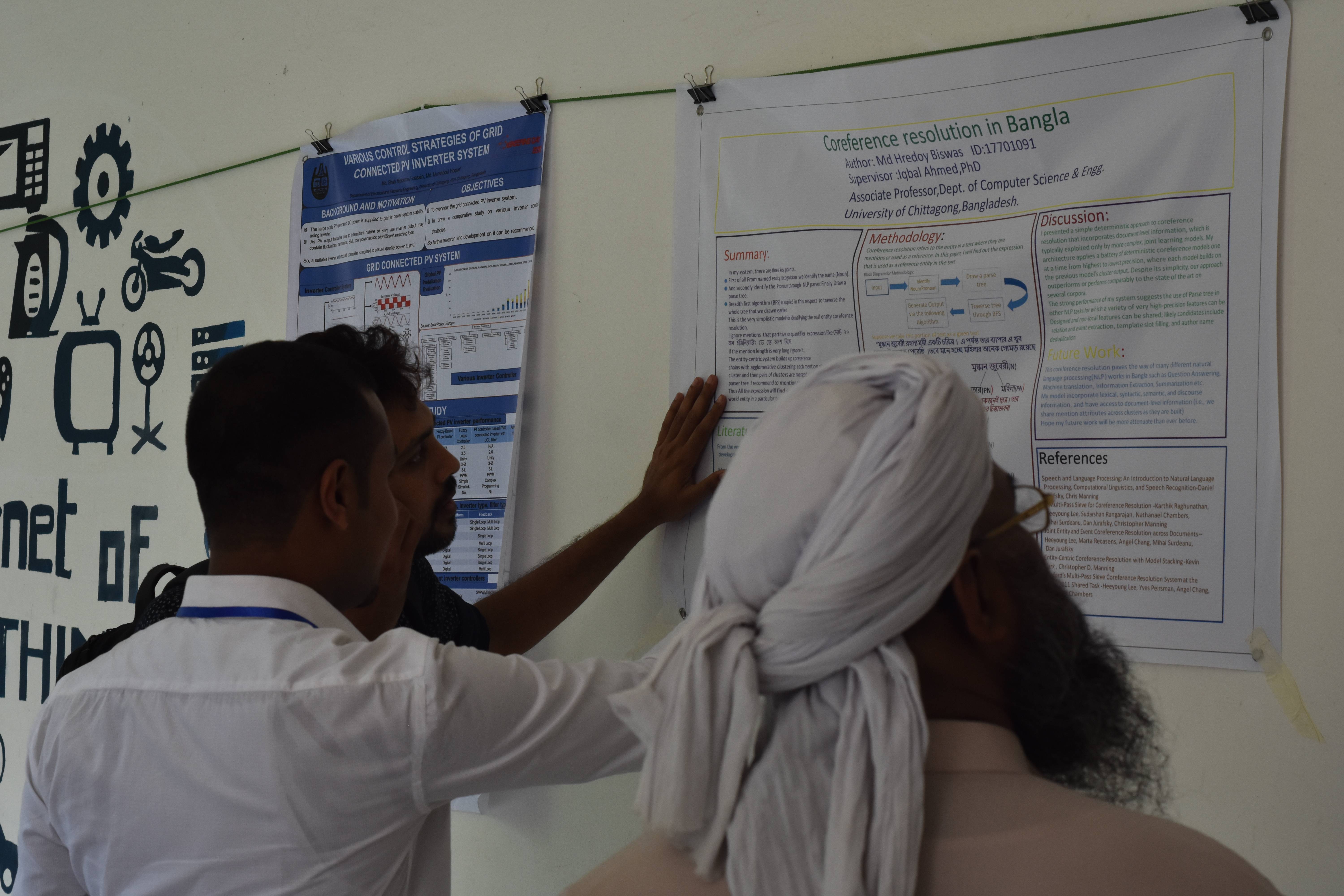

Proud Moments

Service & Activities

Academic Service

Reviewer

Conference and journal reviews across multiple venues in Computer Science

Teaching

Graduate Teaching Assistant responsibilities at George Mason University

Mentoring

Undergraduate research mentoring and guidance in Computer Science projects

Professional Activities

Conference Organization

Student volunteer at various international conferences in Computer Science and AI

Community Service

Outreach activities promoting Computer Science education and technology literacy

Education

Doctor of Philosophy (PhD) in Computer Science

George Mason University, Fairfax, VA

Research Focus: Machine Learning, Deep Learning, Natural Language Processing, LLMs (RAG, Agentic AI), Reinforcement Learning, Multimodal Reasoning, Generative AI

MS in Computer Science — Machine Learning Concentration

George Mason University, Fairfax, VA

Coursework & Focus: Machine Learning, Deep Learning, Statistical Methods, Applied NLP, Reinforcement Learning

Bachelor of Science (B.Sc.) in Computer Science & Engineering

University of Chittagong, Bangladesh

Professional Experience

Graduate Teaching Assistant

George Mason University — Virginia, United States

Courses: CS 211: Object-Oriented Programming; CS 310: Data Structures

- Led lab sessions and office hours for undergraduate courses; helped grade assignments and prepare assignment and exam materials.

- Tutored students in Data Structures and Java, improving average assignment scores and helping reduce office-hour wait times.

- Contributed to course material updates and automated marking scripts.

Graduate Research Assistant

George Mason University — Fairfax, Virginia, United States

Worked on key management and network security research; contributed to experiments and codebases.

- Implemented prototype systems for key management and secure communication.

- Assisted in data collection, experimental evaluation, and documentation for publications.

Machine Learning Research Intern

Cogniaide, TN, USA

Tech Stack: LangGraph, LangChain, Python, PyTorch, FastAPI, MongoDB, Weaviate, Docker, Kubernetes (GKE)

- Engineered and coordinated a multimodal RAG QA chat and Deep Research system with parallel tool execution, achieving 1.5×–1.7× faster responses and 35–40% efficiency gains and cutting reasoning time by 98%.

- Designed and developed a multi-agent writing system with LangGraph and LangChain, reducing first-draft writing time by 95%.

- Hardened confidential workflows by deploying privately owned Azure models behind private endpoints and role-based access.

Software Engineer

BJIT Group, Tokyo, Japan

Tech Stack: Spring Boot, JPA, JSP, Amazon EC2, Spiral DB, REST APIs, Python, JIRA, Redmine, JWT

- Engineered a personalized advertising and recommender system for a doctor–patient portal using user signals and session context, improving relevance and reducing manual campaign tuning by 70%.

- Designed and organized the end-to-end data pipeline from Spiral DB to the serving layer: developed ETL, computed features, and integrated ML stages feeding REST APIs; deployed on Amazon EC2.

Software Engineer Intern

BJIT Group, Tokyo, Japan

Tech Stack: Spring Boot, Hibernate (JPA), JSP, REST APIs, React, JavaScript, MySQL, Node.js

- Developed a training-management platform for BJIT Academy with real-time messaging and SNS to streamline trainer–trainee communication.

Latest News

- [May 2025] Started as Machine Learning Research Intern at Cogniaide, TN, USA.

- [Dec 2024] Currently pursuing PhD in Computer Science at George Mason University.

- [Aug 2022] Completed B.Sc. in Computer Science & Engineering from University of Chittagong, Bangladesh.

- [Aug 2022] Worked as Software Engineer at BJIT Group.

- [2021] Presented poster on "Coreference Resolution for Bangla" in NLP.

- [2021] Worked on TREC-IS project under supervision of Dr. Abu Nowshed Chy.

Get In Touch

Office Location

George Mason University

Fairfax, VA, USA

Research Interests

Let's Collaborate!

Feel free to reach out if you're interested in collaboration or have any questions about my research. I'm always excited to discuss new ideas and opportunities!